5 AI Concepts I Wish Someone Had Explained to Me Earlier

"The 5 AI terms I wish someone had explained to me like a normal person."

Hey friends 👋

When I first started playing seriously with AI, I kept running into these strange words:

Context windows. Tokens. Fine-tuning. RLHF...

Everyone seemed to know what they meant — except me.

The problem?

Nobody was breaking it down in normal language. Just endless tech-speak.

So today I want to fix that.

Here’s a short, simple guide to 5 core AI concepts that will help you understand what’s actually happening inside these models, and make you sound 10x smarter in your next AI conversation.

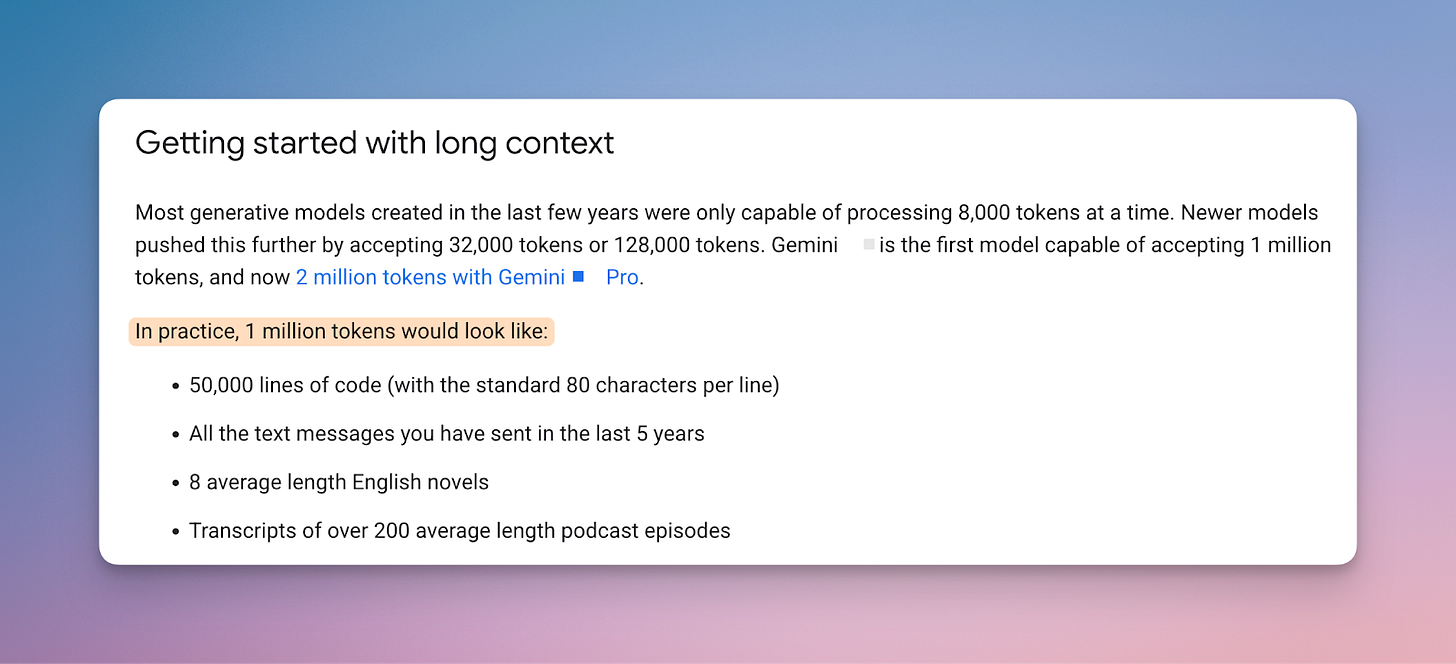

1️⃣ Context Window — How Much the AI Can "Remember"

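Every AI model has a kind of short-term memory called a context window: the maximum amount of text (your prompt, any documents you paste in, and the conversation so far) it can take into account at one time.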

The bigger it is, the more information it can process at once.

Imagine this:

Small window = quick chat about where to have dinner.

Big window = deep dive into your 10-year business plan.

Models like Gemini or Claude 3 have very large context windows (hundreds of thousands of tokens or more), which means they can take in giant documents, full books, or long conversation threads in one go.

Why this matters:

If you're summarizing books, analyzing contracts, or feeding in huge datasets, pick a model with a large context window. If your material doesn’t fit, the model simply can’t see all of it, and the output suffers.
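Want a quick way to guess whether a document will fit? Here’s a minimal sketch. It assumes the common rule of thumb of roughly 4 characters per token for English text, which is only an approximation. The window sizes, the `reserve_for_reply` value, and the function names are made up for illustration.

```python
# Rough rule of thumb for English text: about 4 characters per token.
# This is an approximation, not a real tokenizer.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Estimate the token count from character length (rounded up)."""
    return -(-len(text) // CHARS_PER_TOKEN)


def fits_in_window(text: str, window_tokens: int, reserve_for_reply: int = 1000) -> bool:
    """True if the text, plus some room for the reply, fits in the window."""
    return estimate_tokens(text) + reserve_for_reply <= window_tokens


# Stand-in for a long document: 500,000 characters.
document = "word " * 100_000

print(estimate_tokens(document))          # 125000
print(fits_in_window(document, 8_000))    # False
print(fits_in_window(document, 200_000))  # True
```

For anything that matters, count tokens with the model provider’s own tokenizer instead of this estimate.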

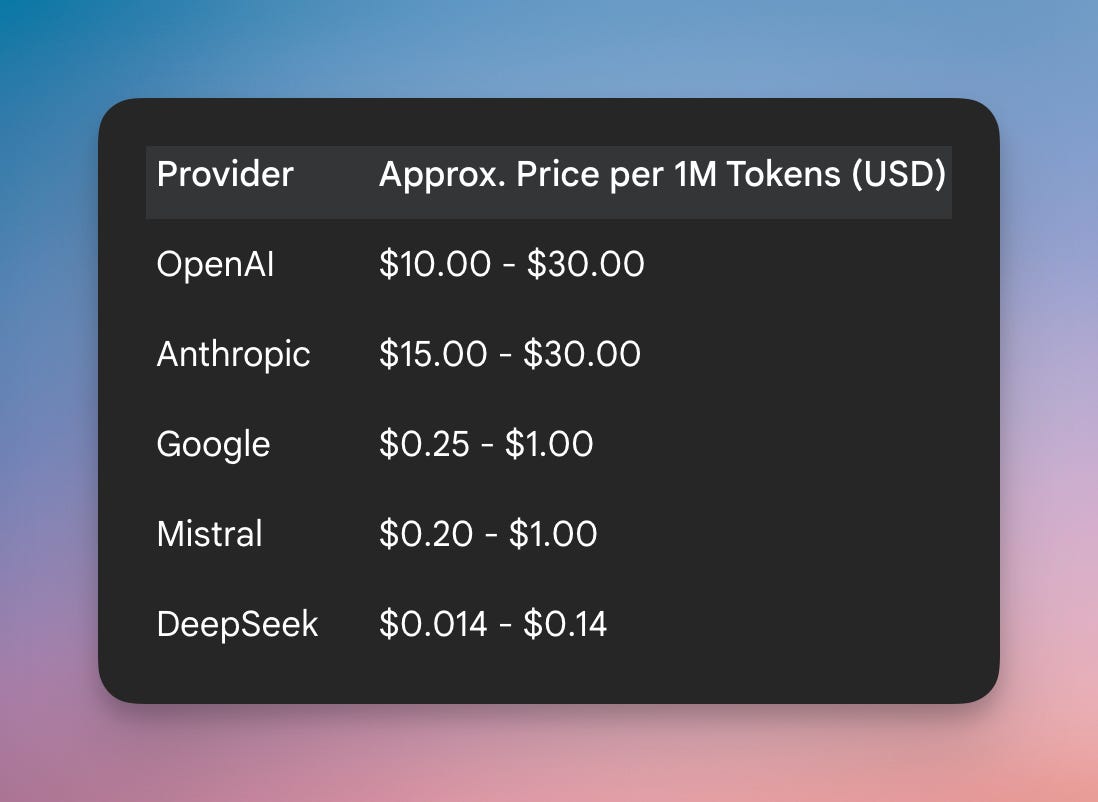

2️⃣ Tokens — The Currency of AI

AI doesn’t see words the way we do.

It breaks text into smaller units called tokens — often little chunks of words.

Example:

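The word “playing” might be split into “play” + “ing”: two tokens. The exact split depends on the model’s tokenizer, and many common words end up as a single token.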
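To make the idea concrete, here’s a toy tokenizer. It is a sketch only: the tiny `VOCAB` is invented, and real tokenizers learn their word pieces from huge amounts of text and use more sophisticated rules than this greedy longest-match loop.

```python
# Hypothetical mini-vocabulary; real models use tens of thousands of pieces.
VOCAB = {"play", "ing", "ed", "er", "walk", "s"}


def tokenize(word: str) -> list[str]:
    """Greedy longest-match split of a word into known pieces.

    Characters not covered by any piece become single-character tokens.
    """
    tokens = []
    i = 0
    while i < len(word):
        # Try the longest possible piece first, then shorter ones.
        for j in range(len(word), i, -1):
            if word[i:j] in VOCAB:
                tokens.append(word[i:j])
                i = j
                break
        else:
            # No known piece starts here: fall back to one character.
            tokens.append(word[i])
            i += 1
    return tokens


print(tokenize("playing"))  # ['play', 'ing']
print(tokenize("walkers"))  # ['walk', 'er', 's']
```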

Why this matters:

Most AI APIs charge you based on token usage (chat apps with a flat subscription hide this, but the counting still happens behind the scenes). Both your input and the AI’s output consume tokens.

Longer prompts? More tokens. Longer responses? More tokens.

Hit your token limit, and you might get cut off mid-response.
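Here’s what that billing math looks like as a quick sketch. The prices below are hypothetical placeholders, not any provider’s real rates. Output tokens are priced higher here because that is a common pattern, but check the actual pricing page for the model you use.

```python
# Hypothetical prices in dollars per 1 million tokens.
# Real prices vary by provider and model.
INPUT_PRICE_PER_MILLION = 3.00
OUTPUT_PRICE_PER_MILLION = 15.00


def estimate_cost(input_tokens: int, output_tokens: int) -> float:
    """Estimated dollar cost of one request."""
    input_cost = input_tokens / 1_000_000 * INPUT_PRICE_PER_MILLION
    output_cost = output_tokens / 1_000_000 * OUTPUT_PRICE_PER_MILLION
    return input_cost + output_cost


# A 2,000-token prompt that gets a 500-token answer.
cost = estimate_cost(input_tokens=2_000, output_tokens=500)
print(f"${cost:.4f}")  # $0.0135
```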

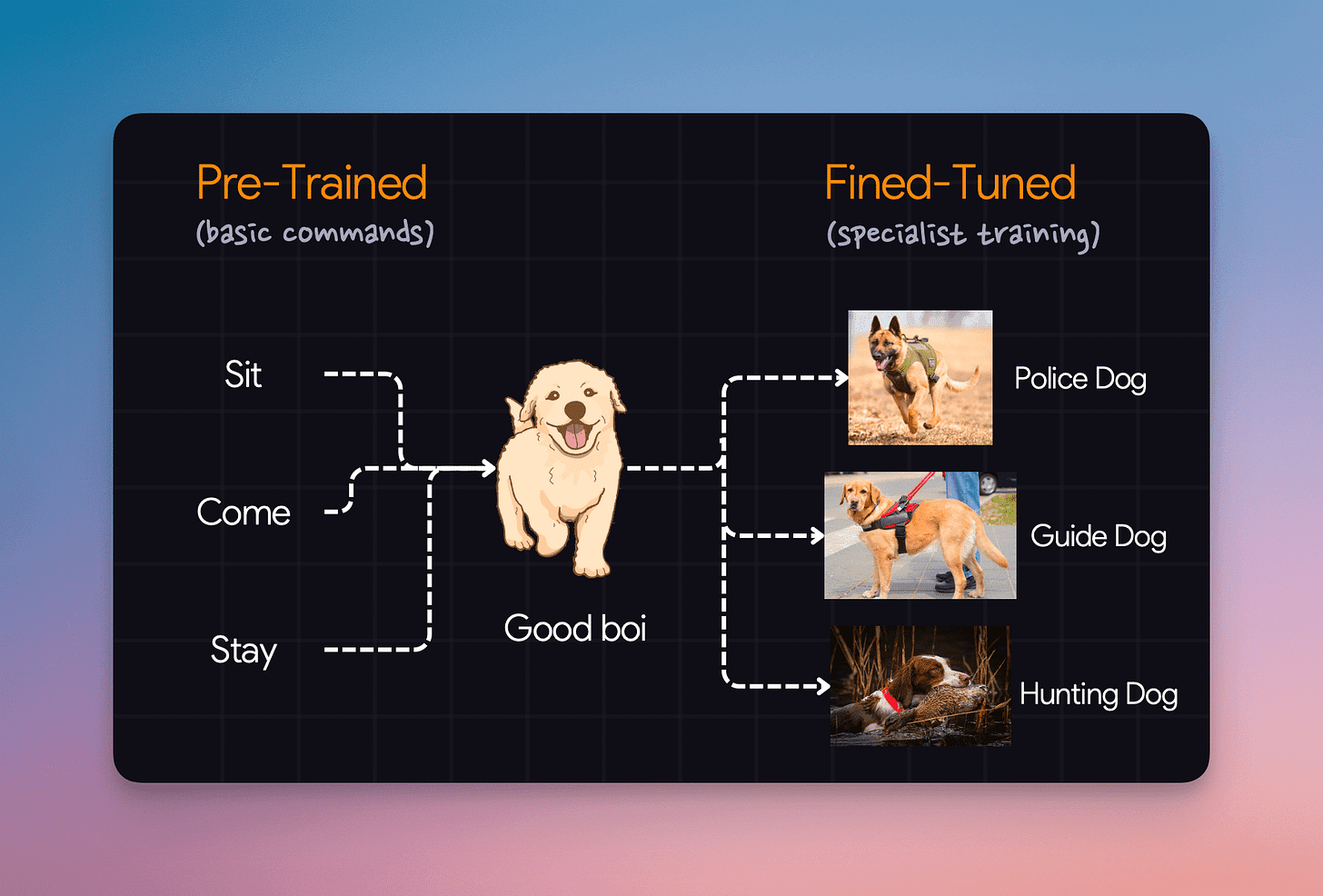

3️⃣ Fine-Tuning — Custom Training for Specific Jobs

Most big AI models are trained on huge, general datasets (like a big chunk of the public internet). But sometimes you want them to specialize, and that’s where fine-tuning comes in.

Think of it like this:

You hire a smart intern (the base AI model), but then you train them specifically for your business using your internal documents.

Example:

Hospitals can fine-tune medical models on clinical records.

Law firms fine-tune models to review contracts.

Startups fine-tune models to handle customer support.

Why this matters:

You don’t have to fine-tune models yourself, but knowing this explains why some niche AI tools massively outperform general chatbots for very specific use cases.
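If you’re curious what the "training material" for that intern looks like, it’s usually just a file of example questions paired with ideal answers. Here’s a minimal sketch that builds one in JSON Lines form (one example per line). The example data is invented, and the exact record layout is an assumption: each fine-tuning service defines its own format, so check its docs before uploading anything.

```python
import json

# Hypothetical support examples: (customer question, ideal answer) pairs.
examples = [
    ("How do I reset my password?",
     "Go to Settings, choose Security, then click Reset Password."),
    ("Can I get a refund?",
     "Yes, refunds are available within 30 days of purchase."),
]


def to_training_record(question: str, answer: str) -> dict:
    """Wrap one Q&A pair as a short chat transcript (assumed layout)."""
    return {
        "messages": [
            {"role": "user", "content": question},
            {"role": "assistant", "content": answer},
        ]
    }


# One JSON object per line: the "JSON Lines" format.
jsonl = "\n".join(json.dumps(to_training_record(q, a)) for q, a in examples)
print(jsonl)
```

A real fine-tuning set would typically have hundreds or thousands of these examples, not two.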

4️⃣ Latency — How Long You Wait for a Response

Simple but important:

Latency is how long the AI takes to respond after you hit "submit."

Example:

When ChatGPT pauses for a few seconds before replying — that’s latency.

Why this matters:

Low latency = fast, natural conversations.

For real-time apps (think voice assistants, live chat, or translation), low latency makes the experience feel smooth instead of frustrating.
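Measuring latency is as simple as starting a stopwatch before the request and stopping it after. Here’s a small sketch. `fake_model` is a made-up stand-in that just sleeps for a moment, so swap in whatever real call you want to time.

```python
import time


def fake_model(prompt: str) -> str:
    """Stand-in for a real AI call; sleeps to simulate thinking time."""
    time.sleep(0.2)
    return f"Echo: {prompt}"


def timed_call(fn, prompt: str):
    """Run fn(prompt) and return (reply, seconds elapsed)."""
    start = time.perf_counter()
    reply = fn(prompt)
    elapsed = time.perf_counter() - start
    return reply, elapsed


reply, latency = timed_call(fake_model, "Hello")
print(f"{reply!r} took {latency:.2f} seconds")
```

Run it a few times and look at the spread. Real-world latency varies from request to request, so a single measurement can mislead.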

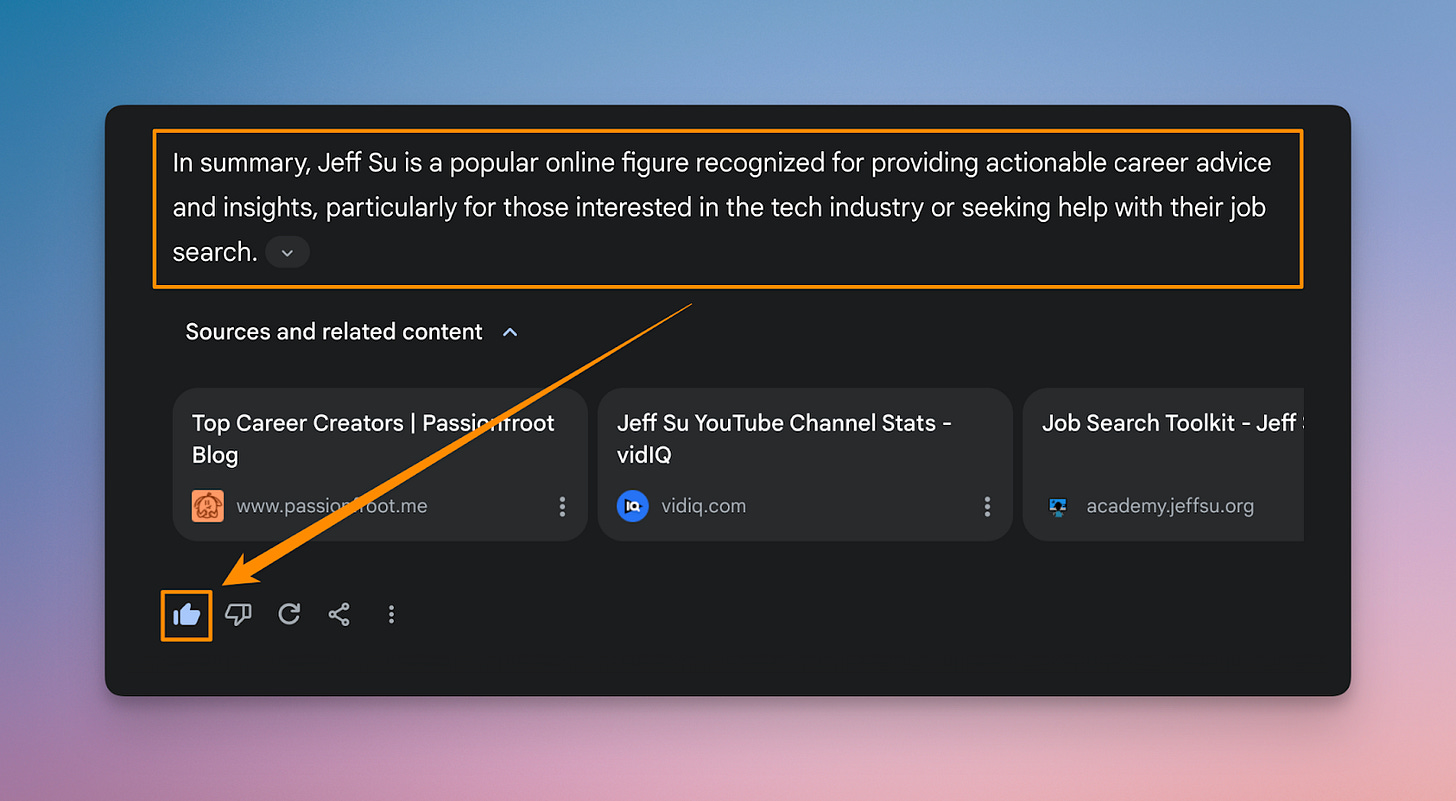

5️⃣ RLHF — How You’re Actually Helping Train AI

RLHF stands for Reinforcement Learning from Human Feedback.

(A mouthful, I know.)

In simple terms: people compare or rate the AI’s answers, those ratings train a second "reward" model that predicts which answers humans prefer, and the main model is then tuned to produce answers that score well.

Example:

When you thumbs-up or thumbs-down a ChatGPT response, you’re giving exactly the kind of signal that can be used to improve future versions.

Why this matters:

When your feedback is used, you’re helping shape the next version of these models. That’s one of the ways AI keeps getting better.
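For the curious, here’s the core idea in a few lines. This is a simplified sketch of the pairwise preference loss commonly used when training a reward model from human comparisons, not a full RLHF pipeline. The scores are made-up numbers standing in for how highly a reward model rates each answer.

```python
import math


def preference_loss(score_preferred: float, score_rejected: float) -> float:
    """Pairwise loss: small when the human-preferred answer scores higher.

    Computes -log(sigmoid(score_preferred - score_rejected)).
    """
    diff = score_preferred - score_rejected
    return -math.log(1 / (1 + math.exp(-diff)))


# The reward model agrees with the human: low loss.
print(round(preference_loss(2.0, 0.0), 3))  # 0.127

# The reward model disagrees with the human: high loss.
print(round(preference_loss(0.0, 2.0), 3))  # 2.127
```

Training nudges the reward model to lower this loss across many human comparisons, so its scores line up with what people actually prefer.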

Wrapping It Up

AI can feel like a black box. But once you peek inside, the core concepts are actually pretty simple.

Context window = how much it can handle at once.

Tokens = how it counts (and bills) your text.

Fine-tuning = custom job training.

Latency = response speed.

RLHF = learning from human feedback.

The more you understand how the engine works, the better you'll drive it.

If this was helpful — hit subscribe, share with a friend, or reply and let me know what other AI topics you'd love to see explained. 🚀