AI is Outgrowing Earth: 5 Shocking Truths About Building Data Centers in Orbit

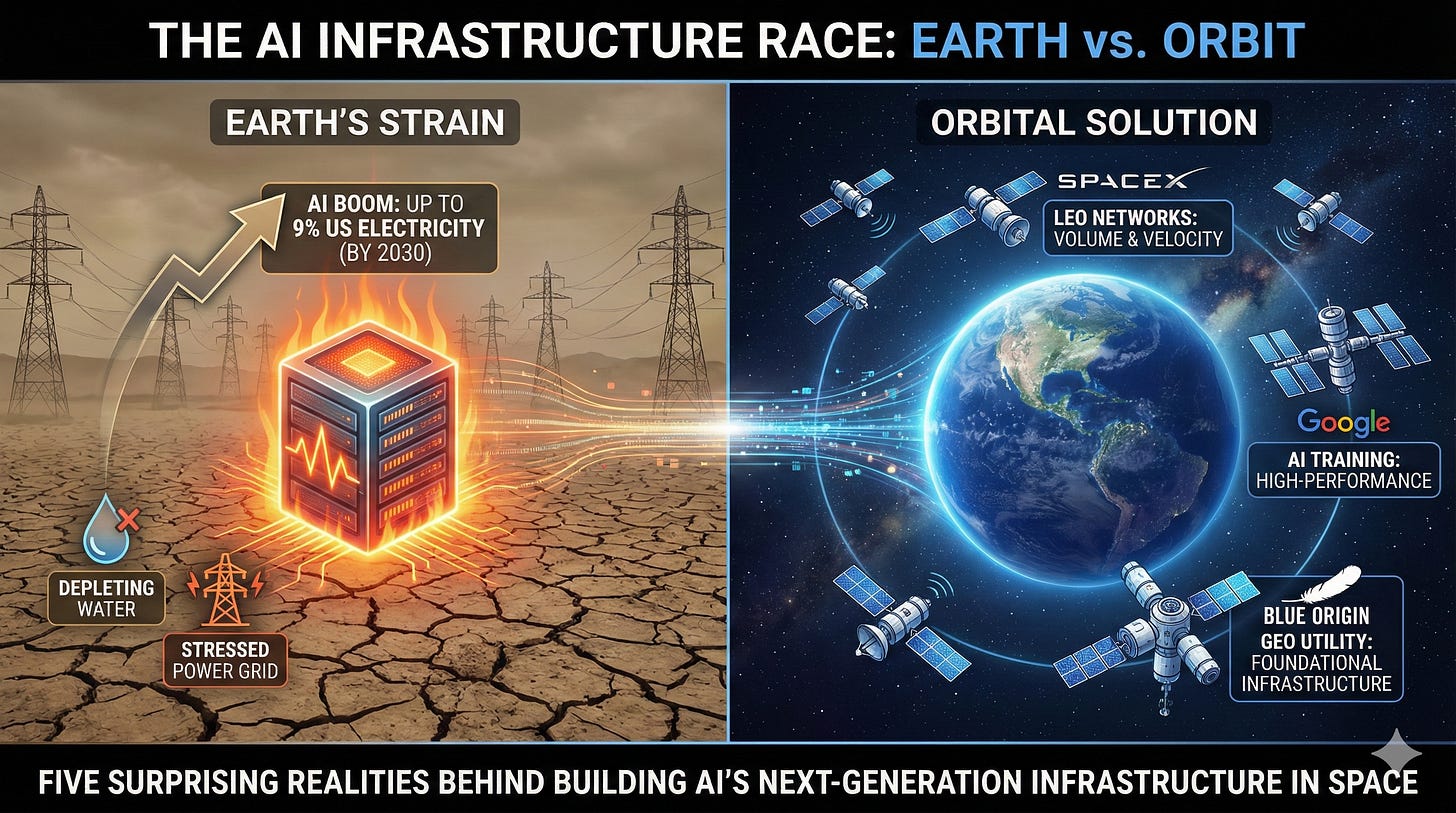

The engine of artificial intelligence is threatening to overheat our planet. The massive data centers that power the AI boom are consuming electricity at a staggering rate, with projections suggesting they could account for up to 9% of total U.S. electricity consumption by 2030. This relentless demand is creating an urgent infrastructure crisis, straining our power grids, land, and water resources.

In response, technology giants are pursuing a solution that sounds like science fiction but is now a strategic imperative: moving data centers into orbit. This is not a unified effort, but a competitive race with distinct strategies. SpaceX is focused on volume and velocity, aiming to launch vast low-Earth orbit (LEO) networks. Google is targeting specialized, high-performance AI training with its custom hardware. And Blue Origin is positioning itself as a foundational orbital utility provider, building the infrastructure to host this new ecosystem in geostationary orbit (GEO). This article reveals five of the most surprising realities behind this monumental undertaking to build AI’s mandatory next-generation infrastructure in space.

AI’s Thirst for Power Is Forcing an Off-World Migration

It’s Not Science Fiction, It’s an Energy Crisis

The primary motivation for building orbital data centers is not innovation for its own sake, but sheer necessity. The current trajectory of terrestrial AI compute is unsustainable. By moving this infrastructure off-world, we sidestep these limitations and gain access to the single greatest advantage of space: virtually unlimited and highly efficient solar power.

In an optimized “dawn-dusk” orbit, a solar panel receives near-constant sunlight, allowing it to be up to eight times more productive than an equivalent panel on Earth. This eliminates concerns over cloud cover, atmospheric scattering, and day/night cycles, providing the sustained, high-density power required for massive AI model training. As Google CEO Sundar Pichai articulated in a widely cited analysis:
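To see where a figure like "eight times" can come from, here is a back-of-the-envelope sketch. The solar constant (about 1361 W/m² above the atmosphere) and the 1000 W/m² test-condition value for ground panels are standard figures; the sunlit fraction in orbit, the ground capacity factor, and the panel efficiency are illustrative assumptions, not numbers from the analyses cited here.

```python
# Rough yearly energy from 1 m^2 of solar panel, in orbit vs. on the ground.
SOLAR_CONSTANT_W_M2 = 1361.0   # sunlight intensity above the atmosphere
GROUND_PEAK_W_M2 = 1000.0      # standard test-condition irradiance at the surface
HOURS_PER_YEAR = 8760.0


def annual_energy_kwh(irradiance_w_m2, sunlit_fraction, efficiency=0.20):
    """Energy in kWh per year from 1 m^2 of panel (efficiency is an assumption)."""
    return irradiance_w_m2 * sunlit_fraction * efficiency * HOURS_PER_YEAR / 1000.0


# Assumption: a dawn-dusk orbit keeps the panel lit about 99% of the time.
orbit = annual_energy_kwh(SOLAR_CONSTANT_W_M2, sunlit_fraction=0.99)
# Assumption: a ground panel averages about 17% of its peak output over a year
# once night, weather, and sun angle are accounted for.
ground = annual_energy_kwh(GROUND_PEAK_W_M2, sunlit_fraction=0.17)

ratio = orbit / ground
print(f"orbit/ground energy ratio: {ratio:.1f}")
```

With these inputs the ratio lands just under eight. A sunnier ground site or a less favorable orbit would shrink the gap, which is why the claim is phrased as "up to" eight times.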

“When you truly step back and envision the amount of compute we’re going to need, it starts making sense and it’s a matter of time.”

But harnessing this limitless power is meaningless without solving the fundamental physics problem of shedding the immense heat it generates in a vacuum.

The Vacuum of Space is an Engineering Paradox

The Biggest Challenge Isn’t Heat, It’s Getting Rid of It

One of the most counter-intuitive challenges of orbital computing is thermal management. Deep space is an excellent heat sink, but the surrounding vacuum rules out convection, the mechanism that fans and air conditioning rely on here on Earth. Without air, the entire thermal control system must rely on conduction to move heat to the radiators and on radiation to reject it into space.

This physical limitation is the defining technical constraint that dictates the entire architecture of an orbital data center. To dissipate the immense heat generated by power-dense AI chips, these facilities must be equipped with massive radiator surfaces. These passive thermal systems often use sophisticated Constant Conductance Heat Pipes embedded within Honeycomb Radiator Panels, which offer an “ideal combination of ultra-low mass, structural integrity and high thermal performance”—all critical in space. The sheer scale of the heat rejection systems needed to cool a gigawatt-scale facility leads directly to the next reality: these structures are simply too big to launch in one piece.
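The scale of those radiators can be estimated with the Stefan-Boltzmann law, which says a surface radiates power in proportion to its area and the fourth power of its temperature. The sketch below is a simplified estimate only: the 300 K radiator temperature, the emissivity of 0.9, and the two-sided panel are assumptions chosen for illustration, and the calculation ignores heat absorbed from the Sun and the Earth.

```python
STEFAN_BOLTZMANN = 5.670374419e-8  # W / (m^2 K^4)


def radiator_area_m2(heat_w, temp_k, emissivity=0.9, sides=2):
    """Radiating area needed to reject `heat_w` watts purely by radiation.

    Idealized: the panel faces deep space on every radiating side and
    absorbs nothing from the Sun or the Earth.
    """
    flux_per_side = emissivity * STEFAN_BOLTZMANN * temp_k ** 4  # W per m^2
    return heat_w / (flux_per_side * sides)


# Assumption: a 1 GW facility turns essentially all of its power into heat.
area = radiator_area_m2(heat_w=1e9, temp_k=300.0)
print(f"panel area at 300 K: {area / 1e6:.2f} km^2")

# Running the radiators hotter shrinks them quickly (fourth-power law).
hot_area = radiator_area_m2(heat_w=1e9, temp_k=350.0)
print(f"panel area at 350 K: {hot_area / 1e6:.2f} km^2")
```

Under these assumptions a gigawatt of waste heat needs on the order of a square kilometer of panel, which makes it clear why the radiators, rather than the chips, set the size of the structure.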

These Giants Won’t Be Launched, They’ll Be Built by Robots in Orbit

Orbital Data Centers Are Too Big for Any Rocket

The gigawatt-scale ambitions for orbital computing mean the final structures will be physically massive—far too large to fit inside the fairing of any existing rocket. The only viable solution is On-Orbit Assembly (OOA).

Orbital data centers will be constructed in space from smaller, modular, and mass-producible components launched on successive missions. These units, sometimes called “satlets”—like the Hyper-Integrated Satlets (HISats) from the DARPA Phoenix program—will be pieced together not by astronauts, but by semi-autonomous robotics. A prime example of this technology is the SSL Dragonfly concept, which uses a highly dexterous robotic arm to assemble satellite components. This marks a fundamental shift away from treating orbital assets as disposable, monolithic satellites and toward building them as adaptable, upgradable infrastructure designed to last for decades.

The Entire Vision Relies on a Single Breakthrough

The Whole Idea is Economically Impossible Without SpaceX’s Starship

The technical marvels of orbital data centers are irrelevant if the economics don’t work. The financial viability of this entire concept hinges on a radical reduction in the cost to launch materials into space.

According to economic analyses, the price to launch a kilogram to low-Earth orbit must fall to around **$200 per kilogram** for orbital facilities to become cost-competitive with their terrestrial counterparts. To put that in context, achieving this price point is almost entirely dependent on the success of fully and rapidly reusable heavy-lift launch vehicles, specifically SpaceX's Starship. The economic models become even more compelling when you consider the endgame: with full reusability, Starship's theoretical internal costs could plummet to below **$15 per kilogram**. This single data point makes the $200/kg target seem not just possible, but highly plausible, while also underscoring how the future of a multi-billion dollar industry rests on one company's rocket program.
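To make the per-kilogram figures concrete, the sketch below multiplies them out for a single facility. The $200 and $15 per kilogram prices are the ones quoted above; the one-million-kilogram facility mass is a purely hypothetical round number, not an estimate from any of the companies involved.

```python
def launch_cost_usd(mass_kg, price_per_kg):
    """Total launch bill for lifting `mass_kg` at a flat price per kilogram."""
    return mass_kg * price_per_kg


# Hypothetical facility mass, chosen only to make the arithmetic easy to read.
HYPOTHETICAL_MASS_KG = 1_000_000

cost_at_threshold = launch_cost_usd(HYPOTHETICAL_MASS_KG, 200.0)  # break-even target
cost_at_floor = launch_cost_usd(HYPOTHETICAL_MASS_KG, 15.0)       # theoretical internal cost

print(f"at $200/kg: ${cost_at_threshold:,.0f}")
print(f"at  $15/kg: ${cost_at_floor:,.0f}")
```

For this hypothetical mass the launch bill is $200 million at the break-even price and $15 million at the theoretical floor, so launch would stop being the dominant line item well before the floor is reached.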

A Stray Cosmic Ray Could Sabotage an AI’s Training

The Invisible Threat of a “Bit Flip”

Beyond the visible challenges of launch and cooling, orbital data centers face a constant, invisible threat: space radiation. High-energy particles can cause a “Single Event Effect” (SEE), the most familiar form of which is a “bit flip,” where a single bit of data in a processor or memory spontaneously changes from 0 to 1 or from 1 to 0. How much damage that does depends on whether the system is doing AI inference (running a finished model) or AI training (building a new one).

A bit flip during inference is like a single typo in a finished book—annoying, but isolated. A bit flip during training is like a typo in the printing press itself, corrupting every subsequent copy. An unnoticed calculation error could silently corrupt a multi-million dollar AI model and compromise the integrity of the entire training run. While Google’s TPUs have proven resilient to the total ionizing dose (cumulative damage over time), they are still vulnerable to these spontaneous Single Event Effects. This makes robust, real-time error correction an absolute necessity for operating in orbit.
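A small sketch shows why a single flipped bit can matter so much, and what one classic form of protection looks like. It flips one bit in a 32-bit floating-point weight, then recovers a corrupted value by majority vote across three copies (triple modular redundancy). This illustrates the general idea only; it is not a description of how Google's TPUs or any particular orbital system handle errors, and the function names are made up for the example.

```python
import struct


def flip_bit(value: float, bit: int) -> float:
    """Return `value` stored as float32 with one bit inverted (bit 0 = least significant)."""
    (raw,) = struct.unpack("<I", struct.pack("<f", value))
    (flipped,) = struct.unpack("<f", struct.pack("<I", raw ^ (1 << bit)))
    return flipped


def majority_vote(a: int, b: int, c: int) -> int:
    """Bitwise majority of three redundant copies: each output bit follows at least two inputs."""
    return (a & b) | (a & c) | (b & c)


weight = 0.5
small_error = flip_bit(weight, 0)    # lowest mantissa bit: a tiny nudge
huge_error = flip_bit(weight, 30)    # highest exponent bit: a catastrophic jump
print(f"original: {weight}, low-bit flip: {small_error!r}, high-bit flip: {huge_error:.3e}")

# Triple modular redundancy: keep three copies, let the majority win.
stored = 0b10110010
hit_by_particle = stored ^ (1 << 3)  # one copy suffers a single bit flip
recovered = majority_vote(stored, hit_by_particle, stored)
print(f"recovered matches original: {recovered == stored}")
```

The same particle strike can be harmless or ruinous depending on which bit it hits, which is why detection has to be systematic. Triple redundancy is simple but triples the storage cost; error-correcting memory codes and periodic checkpoint validation are other common ways to trade overhead for protection.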

The Dawn of Orbital Computing

Moving AI to space is not a choice, but a strategic imperative driven by the hard physical constraints of an energy-hungry digital world. This monumental leap requires radical new approaches that represent the grand engineering challenges of our time, from the physics of vacuum cooling to the microscopic threat of a cosmic ray. The promise is a truly scalable and sustainable future for artificial intelligence, built on adaptable infrastructure that will last for decades.

As we push our digital infrastructure beyond the atmosphere, we must ask a fundamental question: Are we creating the ultimate sustainable solution for AI, or are we simply exporting our insatiable demand for energy to the final frontier?

Hey, great read as always. A balancing act, like advanced Pilates.

The planet is only in danger of us humans getting overheated and blowing it up, not of any energy we generate.

To avoid this… America is going hard into space and AI, and off-worlding allows escape from Earth’s regulatory and legal gravitational black holes; it does not save the planet from too much energy.

If only histrionics could be converted to electricity, we’d have an entirely new source of energy. Sadly, all hysteria can do is consume.